Roman J. Arora & Robert J. Marzano

(September 2023)

IES Practice Guides

The What Works Clearinghouse (WWC) was established in 2002 to be a central and trusted source of scientific evidence on best practices in education (Wood, 2017). The WWC database is housed within the Institute of Education Sciences (IES), which is a component of the U.S. Department of Education.

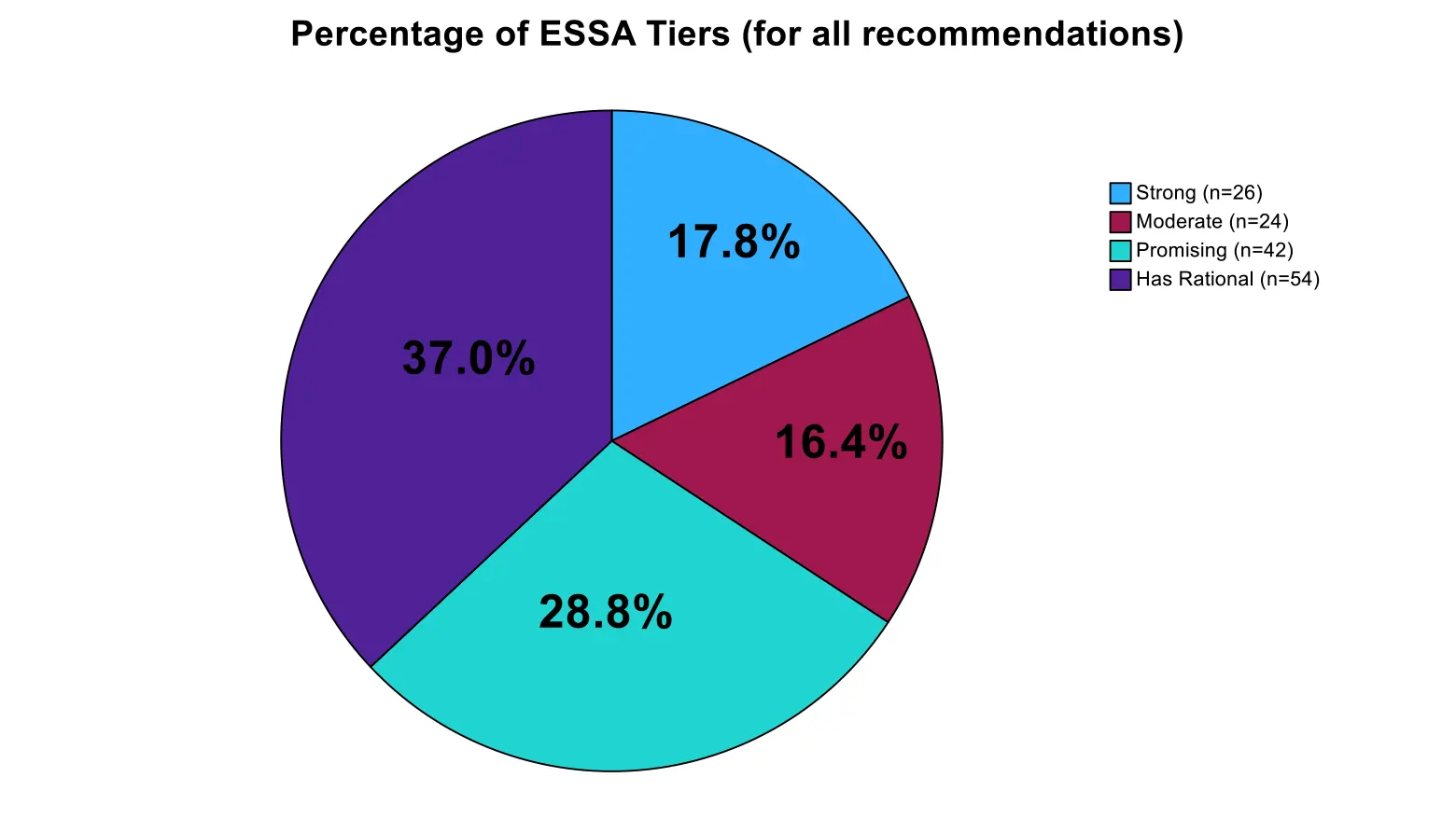

One of the primary tools the WWC uses to recommend best practices to educators is an extensive set of Practice Guides that translate research into practical suggestions. Each practice guide contains from 3 to 9 recommendations. To date, there are 29 practice guides that together contain 146 separate recommendations. To rate these recommendations, IES uses three levels of evidence: strong, moderate, and minimal. In addition, after the Every Student Succeeds Act (ESSA) was signed into law in 2015, another ranking system was introduced that uses four levels, or tiers, of evidence: Tier 1: strong, Tier 2: moderate, Tier 3: promising, and Tier 4: demonstrates a rationale (REL Midwest, 2023). This four-tier approach was designed in part to make research recommendations more fine-grained and, therefore, more interpretable by educators for their site-specific needs.

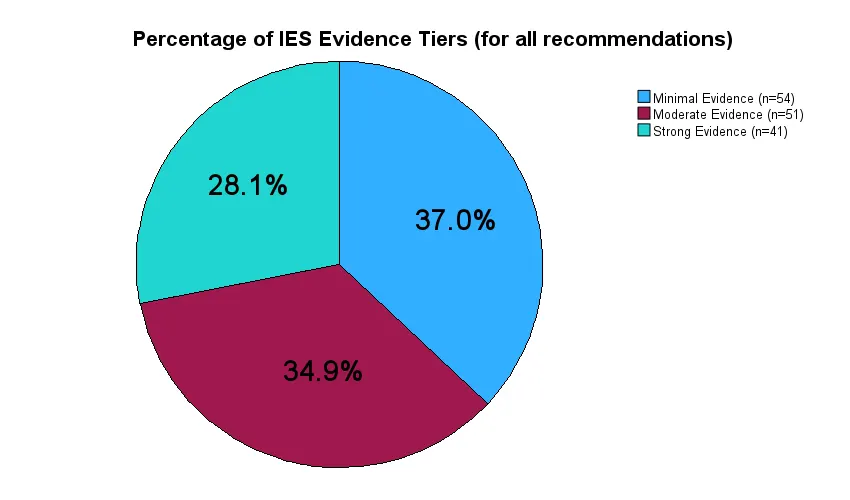

The present study sought to determine the distribution of levels of evidence across the various recommendations as well as the relationship between the two systems of ranking, IES and ESSA. Figure 1 depicts the distribution of rankings across the 146 recommendations for the IES ranking system.

Figure 1: IES System Rankings

As depicted in Figure 1, the IES category into which the most recommendations fell was minimal evidence, with 54 of the 146 recommendations, or 37 percent. The category into which the fewest recommendations fell was strong evidence, with 41 of the 146 recommendations, or 28.1 percent. The remaining 51 recommendations (34.9 percent) fell into the moderate evidence category. Figure 2 depicts the distribution of rankings using the ESSA system.

Figure 2 shows that the ESSA category into which the most recommendations fell was Tier 4 (demonstrates a rationale), with 54 of the 146 recommendations, or 37 percent. The category into which the fewest recommendations fell was Tier 2 (moderate evidence), with 24 of the 146 recommendations, or 16.4 percent. Interestingly, both the IES and ESSA systems placed 37 percent (n = 54) of the recommendations in their lowest category. One might conclude that, with respect to the lowest category of research evidence, raters treat the IES and ESSA systems in the same way. Above that lowest category, however, the ESSA system leads raters to divide the recommendations into finer categories.
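The percentages above follow directly from the category counts. The minimal Python sketch below reproduces them from the counts given in the text. Note that the IES moderate count (51) is inferred as 146 - 41 - 54 rather than reported, that only two of the four ESSA tier counts are reported, and that the helper name `category_percentages` is hypothetical.

```python
# Category counts reported in the text (146 recommendations in total).
TOTAL = 146

ies_counts = {
    "strong": 41,
    "moderate": TOTAL - 41 - 54,  # inferred, not reported directly: equals 51
    "minimal": 54,
}

# Only two ESSA tier counts are reported in the text; the other tiers are omitted.
essa_reported = {
    "Tier 2: moderate": 24,
    "Tier 4: demonstrates a rationale": 54,
}


def category_percentages(counts: dict[str, int], total: int) -> dict[str, float]:
    """Return each category's share of `total` as a percentage rounded to one decimal."""
    return {name: round(100 * n / total, 1) for name, n in counts.items()}


ies_pct = category_percentages(ies_counts, TOTAL)
essa_pct = category_percentages(essa_reported, TOTAL)

print(ies_pct)   # {'strong': 28.1, 'moderate': 34.9, 'minimal': 37.0}
print(essa_pct)  # {'Tier 2: moderate': 16.4, 'Tier 4: demonstrates a rationale': 37.0}

# Both systems place the same number of recommendations in their lowest category.
assert ies_counts["minimal"] == essa_reported["Tier 4: demonstrates a rationale"] == 54
```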

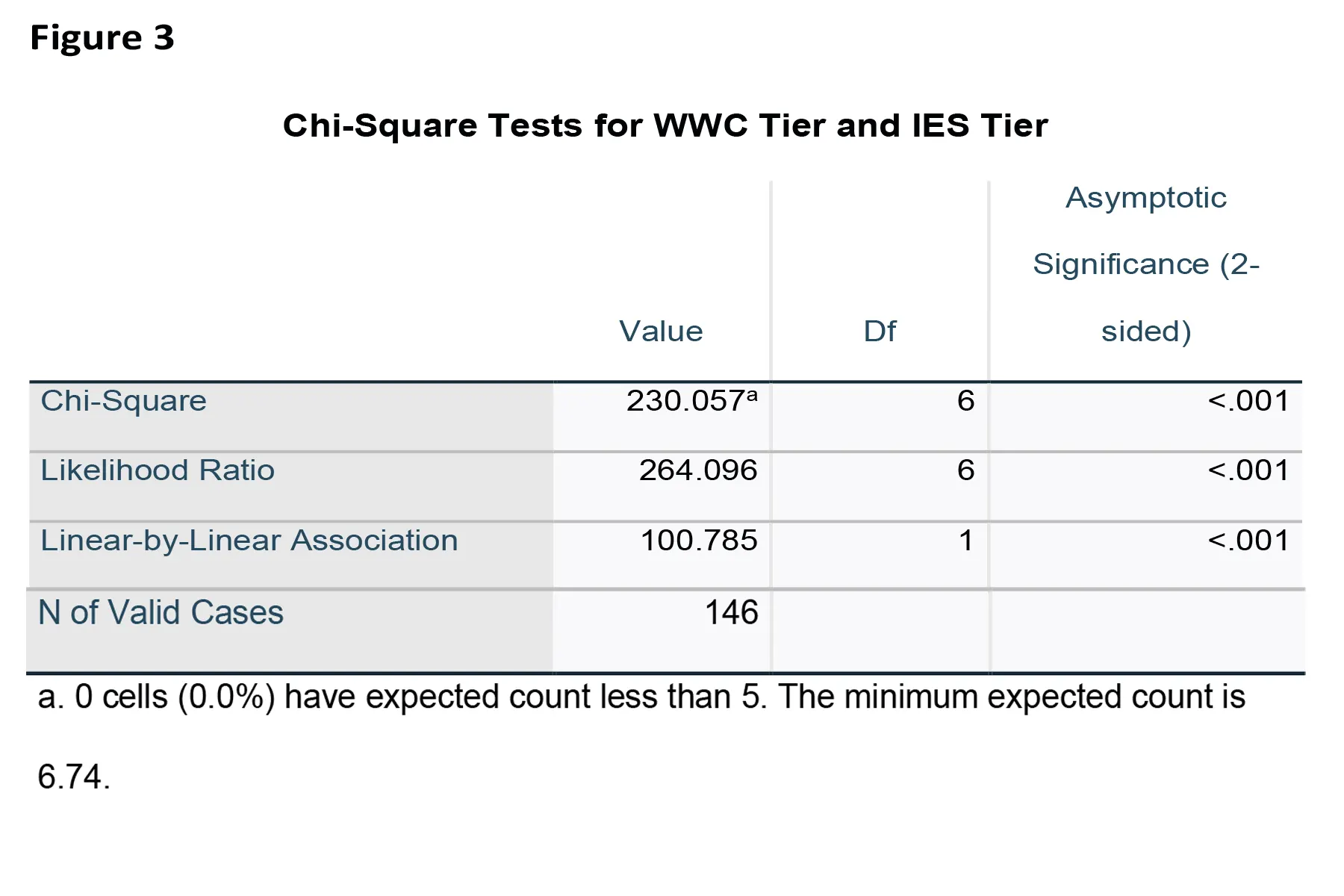

Statistical Relationship between the IES and ESSA Ranking Systems:

Not surprisingly, the statistical relationship between the scores assigned under the two ranking systems is quite high. This is depicted in Figure 3, which reports three analyses of the relationship between the scores: a chi-square test of independence, a likelihood ratio test, and a test of linear-by-linear association. Each of these analyses rests on slightly different assumptions about the data and the type of scale involved (i.e., categorical, ordinal, interval, ratio). The chi-square and likelihood ratio tests treat the rating categories as unordered, whereas the linear-by-linear test treats them as ordered categories with numeric scores. For this discussion, the important point is that all three analyses test whether the scores on the IES and ESSA scales are associated. In all three cases, the test statistics were significant at the .001 level. This indicates that the relationship between the two metrics is very unlikely to be due to chance, regardless of the assumptions made about the nature of the scales and the data.
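All three statistics in Figure 3 can be computed from a cross-tabulation of IES ratings (rows) against ESSA ratings (columns). The study's cross-tabulation is not reproduced in the text, so the sketch below uses a small hypothetical table, and the function name `association_tests` is also hypothetical. It returns the Pearson chi-square statistic, the likelihood ratio statistic G², and the linear-by-linear (Mantel-Haenszel) statistic M² = (N - 1)r², where r is the correlation between the row and column category codes. P-values would come from the chi-square distribution with (rows - 1)(columns - 1) degrees of freedom for the first two statistics and 1 degree of freedom for M². They are omitted here to keep the sketch free of dependencies.

```python
import math


def association_tests(table: list[list[int]]) -> dict:
    """Chi-square, likelihood ratio (G^2), and linear-by-linear (M^2) statistics for a
    contingency table whose rows and columns are ordered categories coded 1, 2, 3, ...
    Assumes every row total and column total is positive."""
    n = sum(sum(row) for row in table)
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]

    chi_square = 0.0
    g_square = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            chi_square += (observed - expected) ** 2 / expected
            if observed > 0:  # an empty cell contributes 0 to G^2
                g_square += 2 * observed * math.log(observed / expected)

    # Linear-by-linear association: M^2 = (N - 1) * r^2, where r is the Pearson
    # correlation between row codes and column codes, weighted by the cell counts.
    rows = range(1, len(table) + 1)
    cols = range(1, len(table[0]) + 1)
    mean_x = sum(x * t for x, t in zip(rows, row_totals)) / n
    mean_y = sum(y * t for y, t in zip(cols, col_totals)) / n
    cov = sum(table[x - 1][y - 1] * (x - mean_x) * (y - mean_y) for x in rows for y in cols)
    var_x = sum(t * (x - mean_x) ** 2 for x, t in zip(rows, row_totals))
    var_y = sum(t * (y - mean_y) ** 2 for y, t in zip(cols, col_totals))
    r = cov / math.sqrt(var_x * var_y)

    return {
        "chi_square": chi_square,
        "likelihood_ratio": g_square,
        "linear_by_linear": (n - 1) * r**2,
        "df": (len(table) - 1) * (len(table[0]) - 1),
    }


# Hypothetical 3 x 4 table (IES codes 1-3 by ESSA codes 1-4); NOT the study's data.
example = [
    [8, 2, 0, 0],
    [1, 3, 5, 1],
    [0, 0, 2, 8],
]
print(association_tests(example))
```

The two unordered tests (chi-square and G²) ignore the order of the categories, so permuting the rows leaves them unchanged. M² uses the codes as scores, so it would change.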

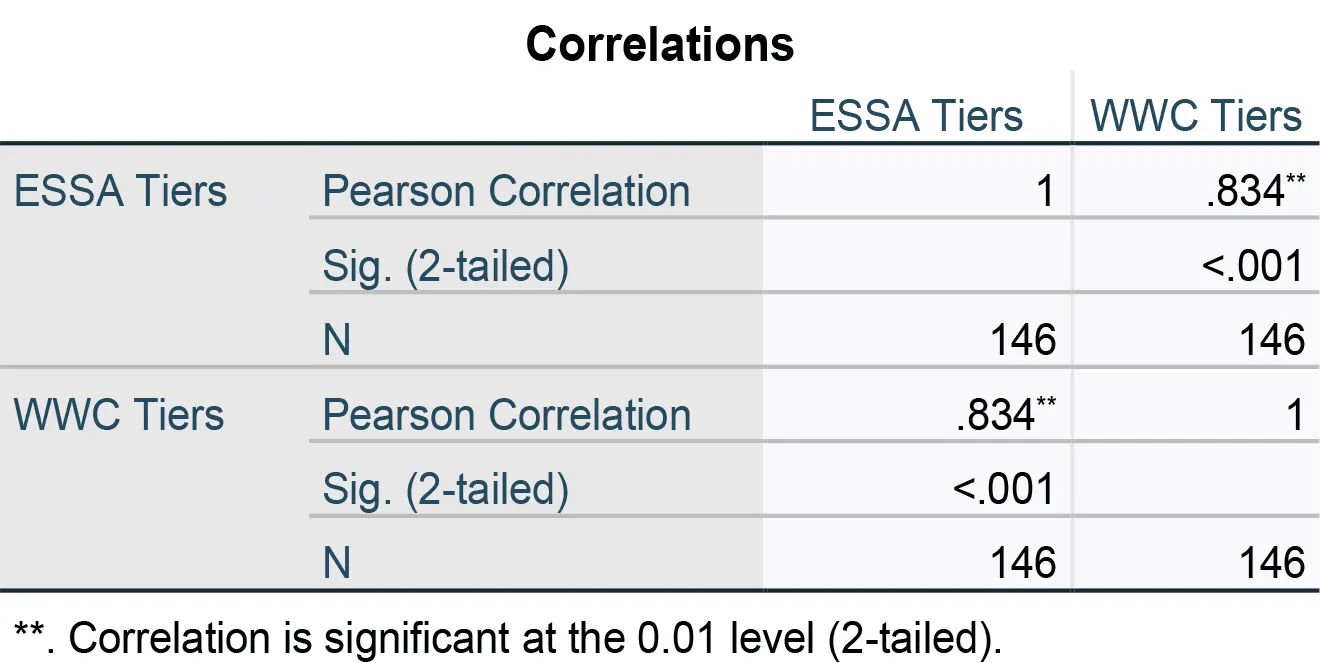

Figure 4 reports the analysis of relationships between the two scales using the Pearson correlation. The Pearson correlation examines the linear relationship between two variables and is generally employed with interval and ratio scales; however, it is probably the most common method of analyzing relationships between variables within the field of education.

Figure 4 indicates that the correlation between the IES and ESSA scales is .834 (r = .834, p < .001). This is a very large correlation by social science standards, well above the value of .50 that Cohen (1988) described as large. Squaring the correlation (.834² ≈ .70) indicates that the two scales share about 70 percent of their variance.
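The sketch below computes a Pearson correlation between two lists of ordinal codes (1 = strongest evidence) and the corresponding shared variance r². The paired ratings are hypothetical because the study's item-level ratings are not listed in the text. The function name `pearson_r` is also illustrative. The last lines apply the squaring step to the coefficient reported in Figure 4.

```python
import math


def pearson_r(x: list[float], y: list[float]) -> float:
    """Pearson product-moment correlation between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length, at least 2 items each")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    ss_x = sum((a - mean_x) ** 2 for a in x)
    ss_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(ss_x * ss_y)


# Hypothetical paired ratings for eight recommendations (NOT the study's data):
# IES codes 1-3 and ESSA codes 1-4, where 1 = strongest evidence.
ies = [1, 1, 2, 2, 2, 3, 3, 3]
essa = [1, 2, 2, 3, 3, 4, 4, 3]

r = pearson_r(ies, essa)
print(f"r = {r:.3f}, shared variance r^2 = {r**2:.3f}")

# Shared variance implied by the correlation reported in Figure 4.
reported_r = 0.834
print(f"{reported_r**2:.3f}")  # 0.696
```

On Python 3.10 or later, the standard library's `statistics.correlation(ies, essa)` returns the same Pearson coefficient.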

Descriptives:

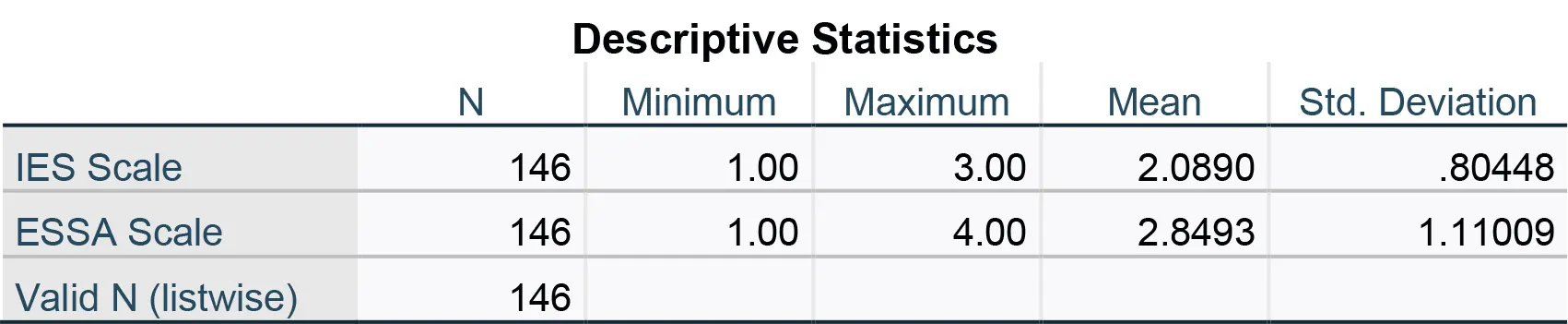

Finally, Figure 5 reports the mean and standard deviation for both the IES and ESSA scales.

The mean for the IES scale is 2.0890, and the mean for the ESSA scale is 2.8493. In this study, a score of 1.00 represented the highest level of evidence on both scales: “Strong” on the IES scale and “Tier 1: strong” in the ESSA ranking system. Larger scores indicate weaker evidence, up to 3 (minimal evidence) on the IES scale and 4 (demonstrates a rationale) on the ESSA scale. Thus, both means lie at or beyond the midpoint of their scales (2.0 for IES and 2.5 for ESSA), that is, toward the weaker-evidence end.
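Each recommendation carries an integer code (1 = strongest evidence), so each mean is a frequency-weighted average of the codes. The sketch below checks the IES mean against the category counts in the text, using the inferred moderate count of 51 (146 - 41 - 54). Only two ESSA tier counts are reported. Under the assumption that the ESSA codes run from 1 to 4 and that 2.8493 is rounded to four decimals, the two missing counts can be solved for. The resulting split (26 in Tier 1, 42 in Tier 3) is an inference from the published figures, not a number reported by the authors. The helper names are hypothetical.

```python
TOTAL = 146


def weighted_mean(counts: dict[int, int]) -> float:
    """Mean code, given {code: number of recommendations with that code}."""
    n = sum(counts.values())
    return sum(code * k for code, k in counts.items()) / n


# IES codes: 1 = strong, 2 = moderate, 3 = minimal.
# The moderate count is inferred as 146 - 41 - 54 = 51.
ies = {1: 41, 2: TOTAL - 41 - 54, 3: 54}
print(f"IES mean = {weighted_mean(ies):.4f}")  # IES mean = 2.0890


def solve_missing_essa(reported_mean: float, tier2: int, tier4: int, total: int) -> tuple[int, int]:
    """Solve for the unreported Tier 1 and Tier 3 counts.
    ASSUMPTION: codes are 1-4 and the reported mean is rounded to 4 decimals."""
    code_sum = round(reported_mean * total)            # 2.8493 * 146 -> 416
    remaining = total - tier2 - tier4                  # Tier 1 + Tier 3 = 68
    remaining_sum = code_sum - 2 * tier2 - 4 * tier4   # 1*Tier 1 + 3*Tier 3 = 152
    diff = remaining_sum - remaining                   # equals 2 * Tier 3
    if diff < 0 or diff % 2 or diff // 2 > remaining:
        raise ValueError("no integer solution; the stated assumptions do not hold")
    tier3 = diff // 2
    tier1 = remaining - tier3
    return tier1, tier3


tier1, tier3 = solve_missing_essa(2.8493, tier2=24, tier4=54, total=TOTAL)
essa = {1: tier1, 2: 24, 3: tier3, 4: 54}
print(essa)                                      # {1: 26, 2: 24, 3: 42, 4: 54}
print(f"ESSA mean = {weighted_mean(essa):.4f}")  # ESSA mean = 2.8493
```

The IES count of 51 reproduces the reported mean exactly (305 / 146 = 2.0890). This suggests the 1-to-3 coding assumption matches the study.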

Conclusions:

Taken as a whole, the findings of this study indicate that the IES and ESSA scales are highly related. Additionally, the findings indicate that the levels of evidence assigned to the 146 recommendations in the Practice Guides tend to be low.

References:

Cohen, J. (1988). Statistical power analysis for the behavioral sciences (2nd ed.). Hillsdale, NJ: Erlbaum.

REL Midwest (Regional Educational Laboratory Midwest at American Institutes for Research). (2023). ESSA tiers of evidence: What you need to know. Arlington, VA: American Institutes for Research. Retrieved March 28, 2023.

Wood, T. W. (2017). Does the What Works Clearing House really work? Investigation into issues of policy, practice, and transparency. Eugene, OR: National Institute for Direct Instruction (NIFDI).